Verizon AI Connect Explained: Architecture, Use Cases, and AI Infrastructure Strategy for Enterprise Networks

Introduction: Why AI Is Forcing a Rethink of Enterprise Networks

Most enterprise infrastructure strategies still treat the network as plumbing—something that connects applications rather than something that defines their performance.

That model is breaking.

As organizations deploy large language models, real-time inference systems, and multimodal AI pipelines, they are encountering constraints that are not rooted in software or compute. They are running into the limits of latency, bandwidth, and data movement economics.

This is the context in which Verizon AI Connect should be understood.

It is not a traditional connectivity product. It is an attempt to transform the network into a deterministic, programmable substrate for distributed AI systems—one that spans private 5G, metro edge compute, and high-density AI data centers.

For technical decision makers, the question is no longer whether AI will scale. It is whether the underlying network architecture can support it efficiently and predictably.

What Is Verizon AI Connect?

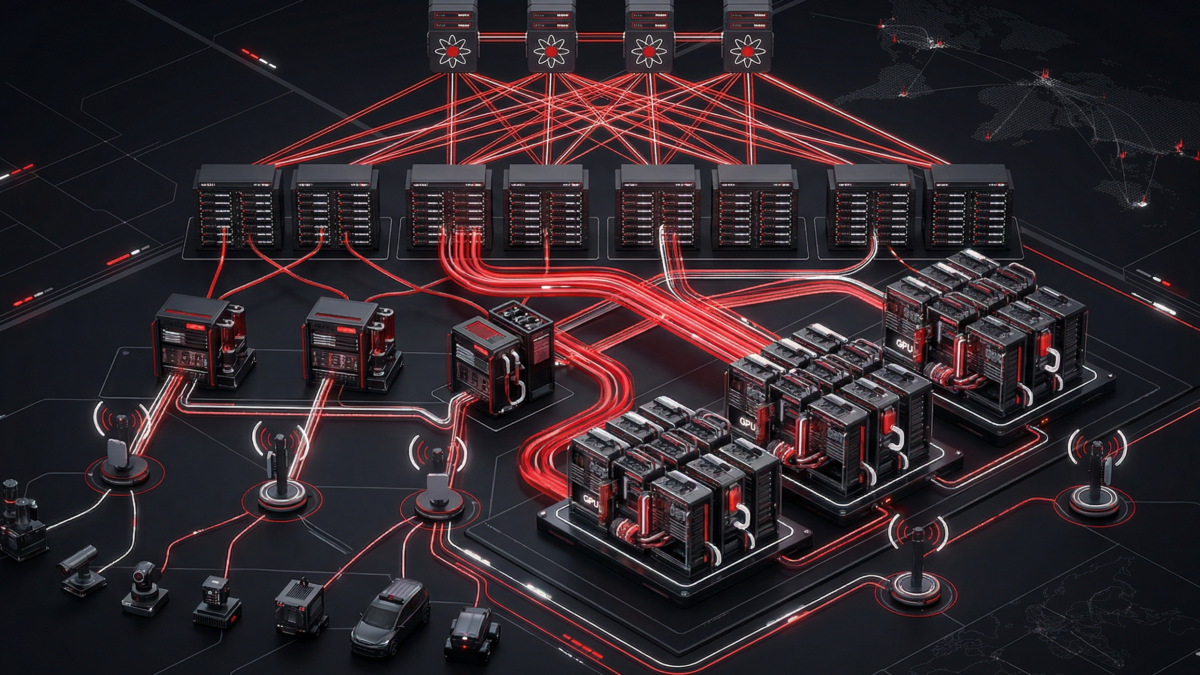

At a high level, Verizon AI Connect is a converged platform that integrates network transport, edge compute, and GPU infrastructure into a unified architecture designed for AI workloads.

Where traditional WAN services prioritize reach and resilience, AI Connect is designed around a different set of requirements: minimizing latency variability, maximizing throughput for east-west traffic, and enabling compute to be placed as close to data as possible.

The platform spans three tightly coupled domains. At the edge, private 5G and on-premises compute environments support ultra-low-latency control loops. At the metro layer, multi-access edge compute (MEC) environments place GPU resources one network hop away from users and devices. At the core, high-density AI hubs support large-scale training and fine-tuning workloads.

What makes this architecture distinct is that the network is no longer passive. It becomes an active participant in AI execution, capable of enforcing quality-of-service policies, dynamically allocating bandwidth, and integrating directly with compute workflows.

For a deeper, practitioner-level perspective, we recommend this interview with Michael Raj of Verizon on the Macro AI Podcast, a leading podcast that helps business leaders stay up to date on how AI is reshaping the business landscape. In the episode, the hosts discuss how Verizon AI Connect is being deployed in real enterprise environments. The conversation goes beyond architecture into how organizations are actually leveraging private 5G, edge compute, and network intelligence to operationalize AI at scale. You can listen to the full episode here: https://www.buzzsprout.com/2454256/episodes/17477146-verizon-ai-discussion-with-michael-raj-of-verizon

The Core Constraint: AI Is a Distributed Systems Problem

To understand why Verizon AI Connect exists, it is helpful to frame modern AI as a distributed systems challenge governed by physics.

Training large models with data parallelism requires frequent synchronization of gradients across GPU clusters. As compute density increases, the bottleneck shifts from processing power to memory bandwidth, the so-called “memory wall,” and to network transport. GPUs frequently sit idle waiting for data to arrive.
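To put rough numbers on the gradient-synchronization load, the sketch below applies the standard bandwidth term for ring all-reduce, in which each of N workers sends about 2(N − 1)/N times the size of the gradient buffer per synchronization. The model size, bf16 gradient precision, worker count, and 400 Gb/s link speed are illustrative assumptions, and the estimate deliberately ignores per-message latency and any overlap of communication with computation.

```python
def ring_allreduce_bytes_per_worker(num_params: int, bytes_per_param: int, num_workers: int) -> float:
    """Bytes each worker sends in one ring all-reduce (bandwidth term only)."""
    if num_workers < 2:
        return 0.0  # a single worker has nothing to synchronize
    buffer_bytes = num_params * bytes_per_param
    return 2 * (num_workers - 1) / num_workers * buffer_bytes


def ring_allreduce_seconds(num_params: int, bytes_per_param: int,
                           num_workers: int, link_gbps: float) -> float:
    """Lower-bound time for one gradient synchronization over links of link_gbps."""
    bytes_per_second = link_gbps * 1e9 / 8
    return ring_allreduce_bytes_per_worker(num_params, bytes_per_param, num_workers) / bytes_per_second


# Hypothetical example: 7B-parameter model, bf16 gradients (2 bytes each), 8 workers, 400 Gb/s links
t = ring_allreduce_seconds(7_000_000_000, 2, 8, 400)
print(f"~{t:.2f} s of pure transfer per synchronization step")
```

Under these assumptions each step spends roughly half a second just moving gradients, which is why idle GPU time tracks network bandwidth so closely.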

At the same time, inference workloads are increasingly real-time. Whether it is a robotic system, a fraud detection engine, or an AI-powered customer interaction, the acceptable latency window is often measured in milliseconds. The speed of light, network congestion, and routing inefficiencies all become material constraints.
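The speed-of-light constraint is easy to quantify. Light in optical fiber travels at roughly c/n, where the refractive index n is about 1.47 for typical single-mode fiber (an assumed value here), which works out to about 5 microseconds per kilometer one way. The sketch computes the minimum round-trip propagation delay for a fiber route; real paths add queuing, serialization, and processing delay, and fiber routes are usually longer than straight-line distance.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_REFRACTIVE_INDEX = 1.47  # assumed typical value for single-mode fiber


def fiber_rtt_ms(route_km: float, refractive_index: float = FIBER_REFRACTIVE_INDEX) -> float:
    """Minimum round-trip propagation delay over a fiber route, in milliseconds.

    Excludes queuing, serialization, and processing delay.
    """
    one_way_s = route_km / (SPEED_OF_LIGHT_KM_PER_S / refractive_index)
    return 2 * one_way_s * 1000


for km in (10, 100, 1000):
    print(f"{km:>5} km of fiber: {fiber_rtt_ms(km):.2f} ms minimum round trip")
```

A cloud region 1,000 km of fiber away costs close to 10 ms of round trip before any congestion is counted, which can consume an entire millisecond-scale control-loop budget.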

Traditional enterprise networks were never designed for this. They were built for transactional workloads, not for continuous, high-volume, latency-sensitive data exchange.

Verizon AI Connect is fundamentally an attempt to close that gap by aligning network architecture with the requirements of distributed AI.

Architecture Deep Dive: How Verizon AI Connect Works

Optical Foundation: OneFiber and AI-Scale Transport

At the base of the architecture is Verizon’s OneFiber initiative, a high-capacity optical backbone designed to handle massive data flows. Using advanced coherent optics and dense wavelength division multiplexing, the network can support throughput in the range of hundreds of gigabits to over a terabit per wavelength.

This level of capacity is not simply about scale. It is about enabling efficient east-west traffic between distributed compute environments. In AI training scenarios, where large datasets and model parameters must move continuously between nodes, reducing congestion and latency variation becomes critical.
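A quick way to see why per-wavelength capacity matters is to estimate how long it takes to move a training dataset at different line rates. The sketch below assumes decimal units (1 TB = 10^12 bytes) and an assumed 90% goodput efficiency after protocol overhead and contention; the 500 TB dataset size is purely illustrative.

```python
def transfer_hours(dataset_tb: float, link_gbps: float, efficiency: float = 0.9) -> float:
    """Hours to move a dataset over a link.

    dataset_tb uses decimal terabytes (1 TB = 1e12 bytes); efficiency is the assumed
    fraction of line rate delivered as goodput.
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    bits = dataset_tb * 1e12 * 8
    return bits / (link_gbps * 1e9 * efficiency) / 3600


for gbps in (10, 100, 400, 800):
    print(f"500 TB at {gbps:>3} Gb/s: {transfer_hours(500, gbps):6.1f} hours")
```

Under these assumptions the same transfer drops from several days at 10 Gb/s to under two hours at 800 Gb/s.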

Equally important is the evolution of metro network design. Rather than relying on traditional hub-and-spoke topologies, Verizon’s architecture increasingly resembles the spine-leaf designs used inside hyperscale data centers. This reduces hop count and helps control jitter, which is essential for maintaining consistent training performance across distributed clusters.
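Jitter is usually measured as variation in one-way transit time between consecutive packets. The sketch below implements the interarrival jitter estimator defined in RFC 3550 (the RTP specification), J = J + (|D| − J)/16, as one concrete way to track the metric a spine-leaf metro design is meant to keep low. RFC 3550 works in RTP timestamp units; milliseconds are used here for readability. Because D is a difference of transit times, a constant offset between sender and receiver clocks cancels out. The sample timestamps are made up for illustration.

```python
def rfc3550_jitter(send_times_ms: list[float], recv_times_ms: list[float]) -> float:
    """Running interarrival jitter estimate, J += (|D| - J) / 16, per RFC 3550."""
    if len(send_times_ms) != len(recv_times_ms):
        raise ValueError("send and receive timestamp lists must be the same length")
    jitter = 0.0
    for i in range(1, len(send_times_ms)):
        # D: change in one-way transit time between consecutive packets
        prev_transit = recv_times_ms[i - 1] - send_times_ms[i - 1]
        curr_transit = recv_times_ms[i] - send_times_ms[i]
        d = abs(curr_transit - prev_transit)
        jitter += (d - jitter) / 16
    return jitter


sent = [0.0, 10.0, 20.0, 30.0]
steady = [5.0, 15.0, 25.0, 35.0]    # constant 5 ms transit
variable = [5.0, 15.0, 27.0, 35.0]  # one packet delayed by an extra 2 ms
print(rfc3550_jitter(sent, steady), rfc3550_jitter(sent, variable))  # 0.0 0.2421875
```

The 1/16 gain smooths the estimate, so a single delayed packet nudges the value up rather than spiking it.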

Transport Innovation: Moving Beyond TCP/IP

Standard TCP/IP networking introduces inefficiencies that become pronounced at AI scale. CPU overhead, congestion control behavior, and packet retransmission all contribute to latency and reduced throughput.

To address this, Verizon AI Connect incorporates technologies such as RDMA over Converged Ethernet version 2 (RoCEv2). RoCEv2 still runs over IP, encapsulated in UDP, but it bypasses the operating system's TCP stack; combined with GPUDirect RDMA, it allows data to move directly between GPU memory on different servers without being copied through host CPU memory or the kernel. This approach significantly reduces latency and CPU overhead.
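Although RoCEv2 avoids the kernel TCP stack, it still rides on IP: each RDMA packet is carried in a UDP datagram with destination port 4791. The sketch below tallies per-packet overhead to estimate how much of the Ethernet line rate remains as RDMA payload at common path MTUs. It assumes untagged Ethernet, IPv4, and packets that carry only the InfiniBand Base Transport Header (as middle packets of a multi-packet RDMA write do); first and last packets, VLAN-tagged frames, and IPv6 all add bytes.

```python
# Per-packet overhead in bytes for a RoCEv2 packet on the wire, assuming untagged
# Ethernet, IPv4, and no extended transport headers (BTH only).
WIRE_OVERHEAD_BYTES = {
    "preamble_and_sfd": 8,
    "ethernet_header": 14,
    "ipv4_header": 20,
    "udp_header": 8,  # RoCEv2 uses UDP destination port 4791
    "ib_base_transport_header": 12,
    "invariant_crc": 4,
    "ethernet_fcs": 4,
    "inter_frame_gap": 12,
}


def rocev2_wire_efficiency(rdma_payload_bytes: int) -> float:
    """Fraction of line rate that carries RDMA payload for full-size packets."""
    if rdma_payload_bytes <= 0:
        raise ValueError("payload must be positive")
    overhead = sum(WIRE_OVERHEAD_BYTES.values())
    return rdma_payload_bytes / (rdma_payload_bytes + overhead)


for mtu in (1024, 4096):  # a 4096-byte RDMA MTU requires jumbo Ethernet frames
    print(f"{mtu}-byte RDMA MTU: {rocev2_wire_efficiency(mtu):.1%} of line rate is payload")
```

The header cost is modest either way; the latency benefit of RDMA comes mainly from removing kernel processing and memory copies rather than from a leaner packet format.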

In parallel, the platform leverages capabilities inherent in 5G standalone architecture to enforce deterministic quality of service. Network slicing allows specific workloads—such as real-time inference—to receive guaranteed bandwidth and latency characteristics, even when the network is under load.
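In 5G standalone networks, each slice is identified by an S-NSSAI: an 8-bit Slice/Service Type (SST) plus an optional 24-bit Slice Differentiator (SD), with standardized SST values defined in 3GPP TS 23.501. The sketch below models that identifier and renders it in a JSON-style shape similar to the one used by 5G core service APIs. The workload names and SD values are hypothetical, and the sketch does not represent how Verizon actually provisions slices.

```python
from dataclasses import dataclass
from typing import Optional

# Standardized Slice/Service Type values (subset) from 3GPP TS 23.501
SST_EMBB = 1   # enhanced mobile broadband
SST_URLLC = 2  # ultra-reliable low-latency communications
SST_MIOT = 3   # massive IoT


@dataclass(frozen=True)
class SNssai:
    sst: int                  # 8-bit Slice/Service Type
    sd: Optional[int] = None  # optional 24-bit Slice Differentiator

    def __post_init__(self) -> None:
        if not 0 <= self.sst <= 0xFF:
            raise ValueError("SST must fit in 8 bits")
        if self.sd is not None and not 0 <= self.sd <= 0xFFFFFF:
            raise ValueError("SD must fit in 24 bits")

    def to_dict(self) -> dict:
        """JSON-style form: integer SST, SD as six hex digits when present."""
        out = {"sst": self.sst}
        if self.sd is not None:
            out["sd"] = f"{self.sd:06X}"
        return out


# Hypothetical mapping of AI workloads to slices; real SD values are operator-assigned.
WORKLOAD_SLICES = {
    "vision-inference": SNssai(SST_URLLC, 0x000001),
    "model-sync": SNssai(SST_EMBB, 0x000002),
    "sensor-telemetry": SNssai(SST_MIOT),
}

for workload, slice_id in WORKLOAD_SLICES.items():
    print(workload, slice_id.to_dict())
```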

The result is a transport layer that behaves less like a best-effort network and more like a predictable extension of the compute fabric.

Compute Placement: From Edge to Core

One of the defining principles of Verizon AI Connect is that compute must move closer to data.

At the far edge, compute resources are deployed directly within enterprise environments, often connected via private 5G. This enables sub-5 millisecond response times for use cases such as industrial automation and robotics.

At the near edge, MEC environments host GPU resources within Verizon’s metro infrastructure. By placing compute one hop away from the radio access network, the platform avoids the latency penalties associated with backhauling data to centralized cloud regions. Clients can also use integrations such as AWS Wavelength, which embeds AWS compute and storage services within Verizon’s 5G network, and benefit from proximity to other Tier 1 ISPs.

At the core, Verizon is building AI-ready data centers designed to support the extreme power densities of modern GPU clusters. These facilities incorporate liquid cooling and high-capacity power infrastructure to accommodate next-generation hardware, which can exceed 100 kilowatts per rack.

This tiered approach allows organizations to align workload placement with latency requirements, rather than forcing all AI processing into a single environment.
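The placement logic can be sketched as a simple policy over the three tiers. The far-edge and near-edge latency figures follow this article's sub-5 ms and sub-20 ms claims; the core figure is a placeholder assumption, and the rule of preferring the most centralized tier that still meets the budget is likewise an assumed policy, chosen to keep scarce edge capacity free for workloads that truly need it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    typical_rtt_ms: float  # assumed round-trip network latency to the tier


# Ordered from most distributed to most centralized; the core figure is a placeholder.
TIERS = [
    Tier("far-edge (on-premises, private 5G)", 5.0),
    Tier("near-edge (metro MEC)", 20.0),
    Tier("core (AI data center)", 60.0),
]


def place_workload(latency_budget_ms: float, processing_ms: float) -> Tier:
    """Pick the most centralized tier whose network RTT plus processing fits the budget."""
    for tier in reversed(TIERS):
        if tier.typical_rtt_ms + processing_ms <= latency_budget_ms:
            return tier
    raise ValueError("no tier meets the latency budget")


print(place_workload(latency_budget_ms=10, processing_ms=4).name)     # far-edge (on-premises, private 5G)
print(place_workload(latency_budget_ms=50, processing_ms=15).name)    # near-edge (metro MEC)
print(place_workload(latency_budget_ms=500, processing_ms=200).name)  # core (AI data center)
```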

The Economics of AI Connect: Beyond Connectivity

For CIOs, the value of Verizon AI Connect extends beyond performance. It directly impacts the economics of AI deployment.

One of the most significant cost drivers in AI is data movement. Large datasets create what is often referred to as “data gravity,” making them expensive and complex to relocate. Hyperscale cloud providers frequently monetize this through egress fees, which can become substantial at scale.

By leveraging Verizon’s private backbone and direct interconnect capabilities, organizations can move data between environments without traversing the public internet. This reduces exposure to variable egress costs and introduces more predictable pricing models.
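The trade-off can be framed as a break-even volume. The sketch compares paying an internet egress rate on every gigabyte with a flat-fee private connection. Because cloud providers often still bill data leaving their network over dedicated interconnects, usually at a lower per-GB rate, that residual rate is included. All prices are hypothetical.

```python
def usage_based_cost(tb_per_month: float, internet_egress_per_gb: float) -> float:
    """Monthly cost when every gigabyte leaving the cloud is billed at the internet egress rate."""
    return tb_per_month * 1000 * internet_egress_per_gb  # decimal units: 1 TB = 1000 GB


def private_path_cost(tb_per_month: float, flat_monthly_fee: float,
                      interconnect_egress_per_gb: float) -> float:
    """Flat-rate private connection plus any reduced per-GB rate the cloud still charges."""
    return flat_monthly_fee + tb_per_month * 1000 * interconnect_egress_per_gb


def breakeven_tb(flat_monthly_fee: float, internet_egress_per_gb: float,
                 interconnect_egress_per_gb: float) -> float:
    """Monthly volume above which the private path is cheaper."""
    saving_per_tb = (internet_egress_per_gb - interconnect_egress_per_gb) * 1000
    if saving_per_tb <= 0:
        return float("inf")  # the private path never pays for itself
    return flat_monthly_fee / saving_per_tb


# Hypothetical rates for illustration only; substitute quoted and negotiated prices.
INTERNET_RATE, INTERCONNECT_RATE, FLAT_FEE = 0.09, 0.02, 7_000.0

for tb in (50, 100, 300):
    print(f"{tb} TB/month: internet ${usage_based_cost(tb, INTERNET_RATE):,.0f}, "
          f"private ${private_path_cost(tb, FLAT_FEE, INTERCONNECT_RATE):,.0f}")
print(f"break-even ~{breakeven_tb(FLAT_FEE, INTERNET_RATE, INTERCONNECT_RATE):.0f} TB/month")
```

Beyond the break-even volume, every additional terabyte widens the gap, and the flat fee also makes the monthly bill more predictable.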

At the same time, the platform supports a shift from capital-intensive infrastructure investments to consumption-based models. Rather than building and maintaining GPU clusters that may become obsolete within a few years, enterprises can access GPU resources on demand, scaling capacity up or down as needed.

This model often results in lower total cost of ownership, particularly when workloads are variable or rapidly evolving.
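The capital-versus-consumption decision often reduces to a break-even utilization: the average fraction of hours a cluster must be busy before owning it beats renting equivalent capacity. The sketch uses straight-line amortization and hypothetical prices, and it leaves out staffing, financing costs, and resale value, all of which a real model would need.

```python
HOURS_PER_MONTH = 730  # average: 8,760 hours per year / 12


def owned_monthly_cost(capex: float, amortization_months: int, monthly_opex: float) -> float:
    """Straight-line amortized purchase price plus power, space, and support."""
    return capex / amortization_months + monthly_opex


def on_demand_monthly_cost(hourly_rate: float, utilization: float) -> float:
    """Pay only for the hours actually used."""
    return hourly_rate * HOURS_PER_MONTH * utilization


def breakeven_utilization(capex: float, amortization_months: int,
                          monthly_opex: float, hourly_rate: float) -> float:
    """Utilization above which owning is cheaper than renting the same capacity."""
    owned = owned_monthly_cost(capex, amortization_months, monthly_opex)
    return owned / (hourly_rate * HOURS_PER_MONTH)


# Hypothetical 8-GPU server: $300k purchase, 36-month life, $4k/month to run,
# versus an on-demand equivalent at $40/hour. Illustrative figures only.
u = breakeven_utilization(300_000, 36, 4_000, 40.0)
print(f"Owning wins above ~{u:.0%} average utilization")
```

Under these assumptions, renting is cheaper below roughly 42% average utilization, which is consistent with the point that variable or evolving workloads favor consumption models.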

Real-World Use Cases

Use Case: Industrial Automation and Real-Time AI

In manufacturing environments, latency is not a theoretical concern. It directly impacts production quality and throughput.

Consider a semiconductor fabrication line where high-resolution cameras inspect wafers for defects. The time between image capture, AI inference, and robotic response must be measured in milliseconds. Any delay can result in defects passing undetected or production slowdowns.

With Verizon AI Connect, data can be ingested over private 5G, processed locally or at the near edge, and acted upon immediately. Only relevant data is sent upstream for model retraining, ensuring that production networks remain uncongested.
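One way to reason about the inspection loop is as a latency budget: the stage times from capture to actuation must sum to less than the deadline. The stage names, millisecond figures, and 10 ms deadline below are hypothetical; the point is that adding a single round trip to a distant region can consume the entire budget.

```python
def check_latency_budget(stages_ms: dict[str, float], deadline_ms: float) -> tuple[bool, float]:
    """Return whether the summed stage latencies meet the deadline, and the remaining slack."""
    total = sum(stages_ms.values())
    slack = deadline_ms - total
    return slack >= 0, slack


# Hypothetical per-stage latencies for a wafer-inspection loop processed at the edge
edge_stages = {
    "camera exposure and readout": 2.0,
    "private 5G uplink": 1.5,
    "defect inference": 3.0,
    "decision and downlink": 1.0,
    "actuator response": 2.0,
}
# Same loop with an assumed 10 ms round trip to a centralized cloud region
cloud_stages = {**edge_stages, "backhaul round trip": 10.0}

for label, stages in (("edge", edge_stages), ("cloud", cloud_stages)):
    ok, slack = check_latency_budget(stages, deadline_ms=10.0)
    print(f"{label}: meets 10 ms deadline={ok}, slack={slack:+.1f} ms")
```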

Use Case: Healthcare and Federated Learning

Healthcare presents a different challenge. Regulatory constraints often prevent patient data from leaving the facility where it was generated.

AI Connect enables federated learning architectures in which models are trained locally within each hospital environment. Only the learned parameters are transmitted across the network for aggregation, allowing the global model to improve without exposing sensitive data.
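The aggregation step can be as simple as a weighted average of parameters. The sketch below implements Federated Averaging (FedAvg), a widely used aggregation rule in which each site's parameters are weighted by its number of local training samples; the article does not specify which aggregation method AI Connect deployments use. Parameters are shown as flat lists of floats, and the hospital values and sample counts are hypothetical.

```python
def federated_average(site_params: list[list[float]], site_sample_counts: list[int]) -> list[float]:
    """Weighted average of each parameter across sites (FedAvg aggregation step)."""
    if not site_params or len(site_params) != len(site_sample_counts):
        raise ValueError("need one sample count per site")
    n_params = len(site_params[0])
    if any(len(p) != n_params for p in site_params):
        raise ValueError("all sites must share the same model shape")
    total = sum(site_sample_counts)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    return [
        sum(params[i] * count for params, count in zip(site_params, site_sample_counts)) / total
        for i in range(n_params)
    ]


# Only locally trained parameters leave each hospital; raw patient records never do.
hospital_updates = [[0.2, 1.0], [0.4, 0.0], [0.6, 0.5]]
samples_per_hospital = [100, 300, 600]
print(federated_average(hospital_updates, samples_per_hospital))
```

Weighting by sample count keeps a small site from pulling the global model as hard as a large one, while the network only ever carries parameter vectors.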

This approach aligns with both privacy requirements and performance needs, as it minimizes data movement while enabling collaboration across institutions.

Use Case: Financial Services and Network-Level Intelligence

In financial services, the rise of AI-driven fraud has exposed weaknesses in traditional authentication methods.

Verizon AI Connect introduces the ability to leverage network-level signals, such as SIM integrity and device identity, as part of the verification process. These checks occur within the network itself, adding a layer of security that is difficult for attackers to replicate.

Because these checks run in the network path rather than as a separate step for the customer, they can strengthen verification with little or no added latency to the customer experience.
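As an illustration of how network-level signals might feed an authentication decision, the sketch below triggers step-up verification when a SIM change is recent or the device does not match the subscriber on record. The NetworkSignals fields, the 72-hour window, and the decision rule are all hypothetical; they do not describe a Verizon API, and a production system would obtain these signals through the carrier's own interfaces.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class NetworkSignals:
    # Hypothetical fields standing in for carrier-provided signals
    last_sim_change: Optional[datetime]  # None if no SIM change is on record
    device_matches_subscriber: bool


def requires_step_up(signals: NetworkSignals, now: datetime,
                     sim_swap_window: timedelta = timedelta(hours=72)) -> bool:
    """Flag a transaction for extra verification based on network-level signals."""
    recent_sim_change = (
        signals.last_sim_change is not None
        and now - signals.last_sim_change < sim_swap_window
    )
    return recent_sim_change or not signals.device_matches_subscriber


now = datetime(2025, 1, 15, 12, 0, tzinfo=timezone.utc)
swapped_yesterday = NetworkSignals(now - timedelta(days=1), device_matches_subscriber=True)
long_standing = NetworkSignals(now - timedelta(days=400), device_matches_subscriber=True)
print(requires_step_up(swapped_yesterday, now), requires_step_up(long_standing, now))  # True False
```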

When Verizon AI Connect Makes Strategic Sense

Not every organization requires this level of architectural sophistication. However, for enterprises operating at scale, certain patterns consistently indicate strong alignment.

Organizations that rely on real-time AI, operate across multiple locations, or face significant data movement costs are particularly well positioned to benefit. Similarly, environments with strict regulatory requirements or high-performance industrial workloads often require the deterministic behavior that AI Connect is designed to provide.

Conversely, smaller deployments or purely cloud-native applications with minimal latency sensitivity may not justify the complexity of a converged network and compute architecture.

The Role of Macronet Services

Macronet Services approaches Verizon AI Connect from a position of vendor neutrality.

Rather than leading with a specific provider, we begin by modeling the technical and economic characteristics of the workload. This includes analyzing latency requirements, data movement patterns, and cost structures across different architectural options.

When Verizon AI Connect aligns with those requirements, we bring Verizon Business into the conversation as part of a broader solution strategy. The goal is not to sell a product, but to ensure that the infrastructure supporting AI initiatives is aligned with both technical realities and business objectives. Please connect with us at Macronet Services anytime for a conversation about your objectives and how we may be able to help you.

Final Perspective: The Network Is Now Part of the Model

The most important shift for technical leaders is conceptual.

AI is no longer something that runs on top of infrastructure. It is something that is deeply intertwined with it.

The real-world performance of an AI system is now influenced as much by network design as by algorithm selection. Latency, throughput, and data locality are no longer secondary considerations; they are core architectural variables.

Verizon AI Connect represents a move toward a future where the network is not just a transport layer, but an integral component of the AI system itself.

For CIOs and Chief AI Officers, the strategic question is evolving:

It is no longer simply where to run models.

It is how to design an infrastructure where network, compute, and AI operate as a single, coordinated system.

Frequently Asked Questions

- What is Verizon AI Connect and how does it work?

Verizon AI Connect is a converged network and compute platform designed to support enterprise AI workloads across edge, metro, and core environments. It combines high-capacity fiber, private 5G, edge computing (MEC), and GPU infrastructure to create a deterministic, low-latency network fabric optimized for distributed AI training and real-time inference.

- How is Verizon AI Connect different from traditional WAN or SD-WAN solutions?

Traditional WAN and SD-WAN architectures are designed for transactional application traffic and best-effort delivery. Verizon AI Connect is purpose-built for AI workloads, enabling deterministic performance, ultra-low latency, and high-throughput east-west data movement. It integrates compute resources and network intelligence, transforming the network into an extension of the AI infrastructure.

- What types of AI workloads benefit most from Verizon AI Connect?

Verizon AI Connect is best suited for workloads that require low latency, high bandwidth, or distributed processing. This includes real-time AI inference (such as robotics or fraud detection), large-scale model training and fine-tuning, computer vision applications, and federated learning environments where data cannot be centralized.

- How does Verizon AI Connect support edge AI and real-time inferencing?

Verizon AI Connect supports edge AI through private 5G and Multi-Access Edge Compute (MEC), placing GPU resources close to end devices. This can reduce latency to under 20 milliseconds, with on-premises far-edge deployments targeting sub-5 millisecond response times, enabling real-time processing for applications such as industrial automation, autonomous systems, and AI-powered customer interactions.

- What role does 5G network slicing play in Verizon AI Connect?

5G network slicing allows Verizon to create dedicated, isolated network segments with guaranteed bandwidth and latency characteristics. In Verizon AI Connect, this enables prioritization of critical AI workloads, ensuring consistent performance for latency-sensitive applications even during periods of network congestion.

- How does Verizon AI Connect reduce data egress costs from cloud providers?

By leveraging Verizon’s private backbone and direct interconnects, enterprises can move large datasets between locations and cloud environments without relying on the public internet. This reduces exposure to hyperscaler egress fees and allows organizations to better control and predict data transfer costs.

- Can Verizon AI Connect replace or complement hyperscale cloud providers?

Verizon AI Connect is not a replacement for hyperscalers but rather a complementary architecture. It enables hybrid and multi-cloud AI strategies by optimizing how data and workloads move between edge environments, private infrastructure, and public cloud platforms, improving performance and cost efficiency.

- What is the role of GPU-as-a-Service (GPUaaS) in Verizon AI Connect?

GPUaaS within Verizon AI Connect allows enterprises to access high-performance GPU clusters on demand without owning hardware. This enables elastic scaling for AI training and inference workloads, reduces capital expenditure, and shifts the risk of hardware obsolescence to the provider in rapidly evolving AI environments.

- Is Verizon AI Connect suitable for regulated industries like healthcare and finance?

Yes, Verizon AI Connect is particularly well suited for regulated industries. It supports architectures such as federated learning, where sensitive data remains local while models are trained collaboratively. The platform also enables secure, private connectivity and network-level intelligence for compliance, privacy, and security requirements.

- When should an enterprise consider adopting Verizon AI Connect?

Enterprises should consider Verizon AI Connect when they have latency-sensitive AI workloads, large-scale data movement requirements, or distributed environments that require consistent performance. It is especially valuable for organizations deploying real-time AI, operating across multiple sites, or seeking to optimize the cost and performance of AI infrastructure.
